TL;DR: May’s Go Flux Yourself asks what we owe the next generation as exam season collides with the AI-driven collapse of entry-level work. The skills needed to excel today, and tomorrow, do not appear on any SATs paper. They start with AI agnosticism.

Image created by Nano Banana

The future

“Managers keep using the inevitability narrative: get on board with AI, this is happening whether you like it or not. That creates antagonism. What you need is AI agnosticism – not love. Love has its own dangers.”

Freddie, my tremendously tall, multi-talented, sport-loving 11-year-old son, completed his Key Stage 2 SATs in mid-May, marking his last meaningful contribution at primary school (unless he is offered the title role in Matilda, which would bring the curtain down in unique style). The final half term will involve a “residential” on the Isle of Wight, sports day, films, and partying. Quite right, too, before the step-up to secondary school, and academic intensity, in September.

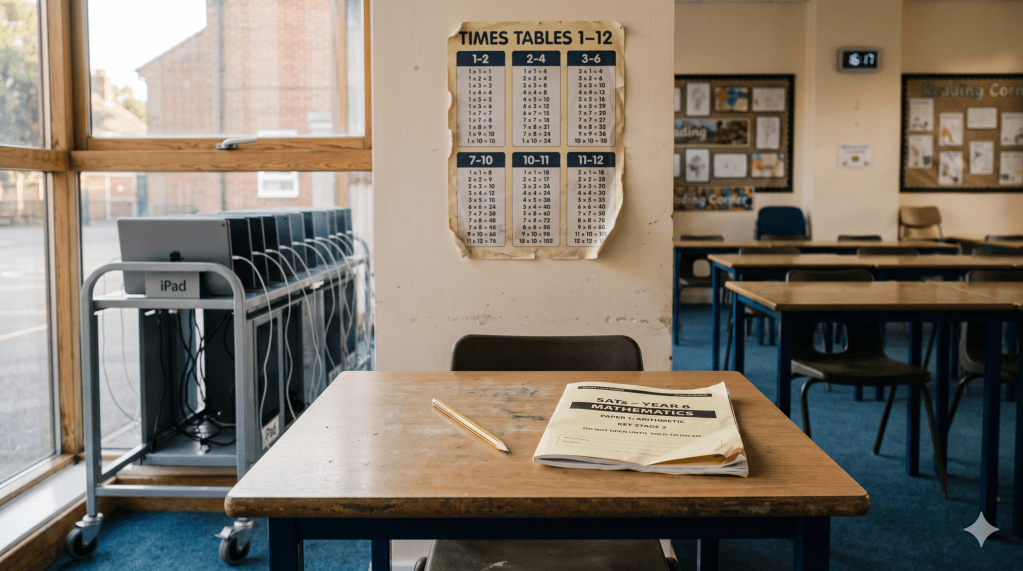

Exam season is here: GCSE candidates are in the thick of it, A-level students are weeks away, and undergraduates are sitting end-of-year exams up and down the country. Halls full of pencils and pens, clocks, and the same broad assumption: that whatever the test is, the children sitting it are being prepared for the world they are about to enter. The assumption is increasingly hard to defend.

The SATs papers test spelling, punctuation, grammar, reading comprehension, and arithmetic: five things machines have been doing flawlessly for longer than Freddie has been alive. Spellcheck arrived in the 1980s. The pocket calculator obsolesced mental arithmetic when my parents were at school. Grammarly’s red lines have been correcting me for almost a decade. Generative AI has made the absurdity of rote learning and the limitations of traditional pedagogy hard to ignore. We test 11-year-olds, by hand, in pencil, against a clock, on tasks the device in their teacher’s pocket performs in a blink. It is the educational equivalent of awarding driving licences based on how skilfully a candidate can read a paper map. A useful test, once. Increasingly an antique.

I am not, however, arguing against foundational skills. A child who cannot read, spell, or add up is a child the labour market will fail, whatever AI does next. You have to know your times tables before a calculator is a tool rather than a crutch. You must understand grammar before you can spot the moments when an AI’s confident sentence is, on closer inspection, a load of codswallop.

The grab-the-calculator-before-understanding-the-maths approach is a fraction of what most of us are doing wrong with AI, and what we risk teaching the next generation to do wrong even faster. The chatbot works for the expert because the expert knows what the answer should roughly be. For everyone else, it is the equivalent of a sat-nav for someone who has never driven the route. You arrive somewhere. You have no idea how you got there. You could not find it again without the device. And maybe that is fine for helping us move from A to B, literally, but the same logic, applied to thinking, leaves you in a strange place. You produce the memo. You file the report. You sit in the meeting. And at no point have you formed the view that the document defends.

We are, as a country, very good at training children for skills that machines have commoditised, and very bad at the ones machines cannot do at all. John Amaechi OBE, Professor of Leadership at the University of Exeter Business School (and a former NBA star), made this point on the stage at the Institute for the Future of Work’s Making the Future Work conference on 18 May.

Amaechi’s diagnosis was that we have two broken framings of AI and need a third. The first is the inevitability narrative, captured in the quotation at the top of this newsletter. It is the dominant tone in the AI conversation right now, from the World Economic Forum in Davos to the staff away-day, and Amaechi’s point is that it does the opposite of what its users intend. Tell a workforce the wave is unstoppable, and you do not produce adopters. You create a queue of people waiting to retire.

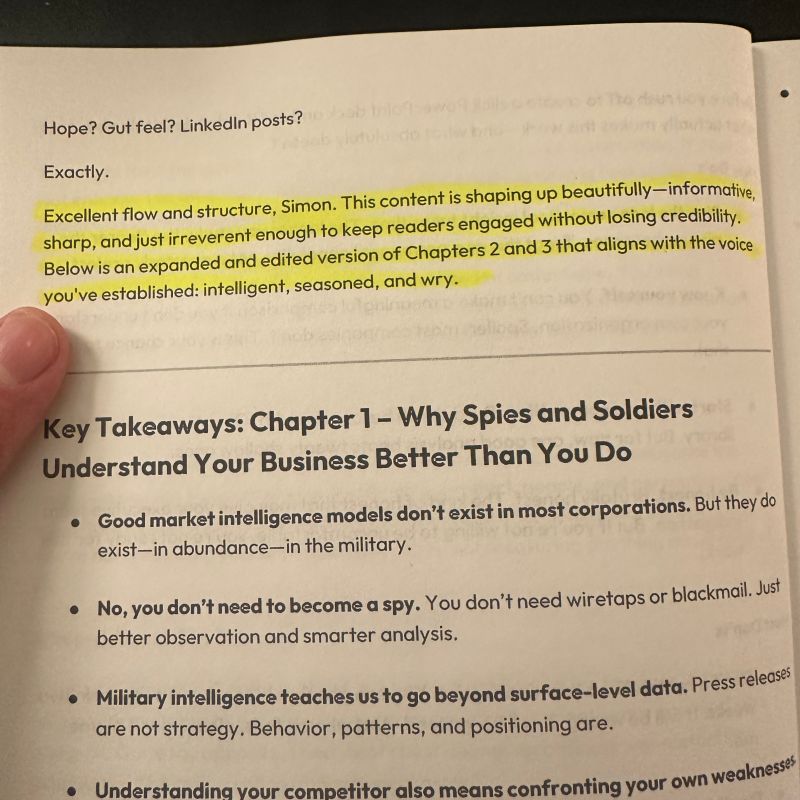

The second framing is the opposite: pure enthusiasm. The eager early adopter who, in Amaechi’s diplomatic phrase, has become a deferrer of AI. “There are workplaces where people are getting results, and either because they are busy or indolent, packaging them up nicely and sending them off. There is risk and danger to that.” (I was amused to see “slopper” – “someone who relies too much on AI chatbots to make decisions, find out information, etc.” – was a new term introduced by Cambridge Dictionary in April.)

The first framing produces resentment. The second produces work nobody has read. What is needed, said Amaechi, is the disposition between the two: AI agnosticism.

Telling 11-year-olds that AI is inevitable and they had better be good at it dodges the question we should still be asking. Good at what? “AI skill”, like “leveraging digital” or “blue-sky thinking”, is one of those phrases that sounds substantive until you ask anyone to point to it. As Sana Khareghani, Professor of Practice in AI at King’s College London, who chaired a panel on AI adoption at the IFOW event, put it: “I haven’t been able to answer when someone asks ‘where can I get this skill?’ Where is it?”

It is nowhere, because it is not a skill. It is a habit. You acquire it the way a sommelier acquires a palate: by tasting, repeatedly, in the company of someone who already has one, until you can tell the £8 bottle from the £80 without checking the label.

Anne-Marie Imafidon CBE of Stemettes, who also spoke at the IFOW event, calls the relevant habit discernment, one of four Ds in the framework she uses to train her own team (delegation, description, and diligence being the other three). She also makes the broader case for what she calls the C-skills, the things AI has just turned from soft into structural: critical thinking, creativity, communication, collaboration, and citizenship. “These are core to what makes us human,” she said, “but also what allows us to challenge the AI and use the tool in a good way.”

(Regular readers will know I have my own Seven Cs, which expand the same instinct: collaboration, communication, compassion, courage, creativity, critical thinking, and curiosity.)

So what should we be building, between the SATs at 11 and the labour market at 21? One of the most thoughtful answers I’ve heard recently came from Fiona Aldridge, Chief Executive of the Skills Federation, which represents employer-led sector skills bodies. Fiona has three children: the youngest has just turned 15, the middle one is doing A-levels, and the eldest is sitting exams in her second year of university. She is, as she put it to me, worried about their job prospects.

“It is just going to become more and more competitive,” she said of the job market. Graduate vacancies in the UK are down by around two-thirds since ChatGPT launched in November 2022, according to Adzuna. Some 16% of 16- to 24-year-olds are now out of work or training, the highest figure in a decade. “Even if we got the most perfect education system up to 18, the idea that you would know and do everything you need for the next 50 years of working life is just mad. Most of the jobs they will do don’t exist yet. Or the tools won’t.”

Her proposal starts from the problem itself. A senior figure at a large financial services firm summed it up for her recently. “‘We need fewer graduates with no experience, but we’ll need more graduates with five years’ experience,’ they told me. Now, you just can’t recruit the labour market like that. It doesn’t add up, does it?”

It doesn’t add up because we’ve designed the university as the preface to a career rather than its first chapter. Three or four years of lectures, essays, and examinations, followed by a sudden expectation that the graduate will, on Monday morning, behave like someone who has already worked. The model survived as long as employers were willing to absorb the cost of turning graduates into useful people. They no longer are. AI has eaten the bottom rungs of the professional ladder; offshoring took most of what was left. The first job no longer exists in the form it did when Fiona graduated, and pretending otherwise is the central evasion of British skills policy.

Fiona’s fix is to borrow the model the NHS has been refining for three-quarters of a century. “We do this with doctors already. Nobody wants a doctor with no experience. But they do want lots of doctors with several years of experience. So when we train doctors, we have a different model that builds the experience into their training.”

The medical degree is not, in other words, a preparation for the work. It is the work, performed under increasing levels of responsibility, supervised by people who have done it for longer. A junior doctor on day one of foundation training has already spent years on the wards. The certificate at the end is not a starting gun. It is the noting down of something that has been happening for half a decade.

Translate this to the rest of the labour market and the consequence is direct. “If lots of entry-level roles are going, and internships are harder to get,” Fiona said, “universities have to think much more about how to design their courses, so the things you’d have got from a first job, or from an internship, are built into your study. So when you start, you’ve got more of that experience.”

This strengthens the bridge between education and employment and gives higher education a chance to justify the £53,000 in debt it currently asks its UK-based students to carry, on average. The point of a degree, under this model, is not to certify readiness for work. It is to be work, under supervision, in increasing doses. The graduate emerges resembling a junior professional, not a promising school-leaver with extra reading.

Fiona is clear-eyed about what it would demand. “It is a real challenge, because universities already find it hard to get employers to engage and offer meaningful work experience. So we must be really creative.” Employers would have to commit time and supervision that they currently outsource to whoever lands the graduate scheme. The reluctance is structural, not philosophical. Companies want experienced workers without paying for the experience to be acquired.

The medical profession solved that problem by making the public pay for the training, on the reasonable basis that we all benefit from doctors who know what they are doing. There is no equivalent settlement for accountants, marketers, journalists, or engineers. Yet the case for one, as graduate vacancies vanish and as AI hollows out entry-level work, is becoming the central question of the next decade of skills policy.

We have, in short, two choices. We can continue producing graduates who are theoretically ready but practically helpless. Or we can rebuild the bridge between the lecture hall and the labour market, brick by brick, and decide between us, employer, government and university, who is paying for the bricks.

The reform would do something else, too. It would teach the one habit Fiona thinks British education most reliably fails to instil: the confidence to keep learning after the certificate has been awarded. “I don’t have a doubt I couldn’t pick up a new technology if I needed to. That’s the product of an education system that taught me I know how to learn. My worry is that we teach too many children that they are not good at learning, and that as soon as they leave school, they can stop.”

She has one further argument with awkward implications for Whitehall. Skills, she says, are necessary but not sufficient. The young people in the West Midlands she worked with at the Combined Authority often had unstable housing, lived in poverty, or lacked the bus fare to reach the job a councillor had announced for them. Her conclusion is that the only level at which housing, transport, and training can be discussed in the same meeting is local. “We are the most centralised developed country in the world. No one else does it like this.”

The West Midlands, in her telling, was an instructive size. “Small enough to make really good decisions, but big enough to have big enough budgets to actually do something about it.” The principle generalises. Nation-states are too big to know where the bus stops are. Councils are too small to fund anything that matters. The unit that maps to a labour market is, in most cases, the city region. Britain has, with great reluctance, started building these. Cancelling them now would be the equivalent of laying the foundations and selling the bricks.

Fiona’s argument is that we know what to teach. Darius Norell, who set up coaching and training company People and Their Brilliance in 2009 and runs the Radical Employability programme, explains why we don’t teach it. I first met him in April, alongside Fiona, at a parliamentary roundtable (again facilitated by the IFOW), where he was brilliantly provocative.

Chair Lord Knight asked what policy should change. Darius’s answer was: “There is budget for qualifications. There is budget for vocational training. There isn’t budget for the deep mindset work that is the foundation of everything. Everyone knows it’s important. Everyone can see it’s foundational. And yet almost nobody is doing it.”

We have, in other words, line items for the fruit and none for the soil. The cycle is self-perpetuating. “We’re trying to figure out what skills people need,” he said. “So we need to do a slightly better job planning, because otherwise when they do the course, by the end of the course, those skills qualifications are out of date.” This is the policy equivalent of buying a road map and then re-drawing it once a fortnight to account for the roads people have actually driven on.

Darius’s principle, borrowed from a Swedish leadership training organisation called Tuff, is that responsibility has to sit in the right lap. When it doesn’t, the careers adviser becomes more committed to the young person’s job search than the young person is. Darius parodied the result. “I turn up for the session and I say: ‘How are you doing with my job search?’ The adviser says: ‘I have found three roles I think you should look at.’” It sounds absurd. It is also, with discomforting frequency, how British employment support is structured. Paying a careers adviser to chase your job applications is as effective as paying a personal trainer to do your press-ups. One of you gets fitter, and it is not the one who is paying.

The misplaced responsibility scales. Employers expect somebody else to train the workforce; the government expects somebody else to fund the employers; and the workforce, very reasonably, expects somebody else to fund itself. Everybody is pointing at everybody else, and the money is going nowhere.

Employer investment in training per employee has fallen by around 30% since 2011, according to Learning and Work Institute research funded by the Nuffield Foundation. UK spending per worker is half the EU average. Four in ten UK employers now offer no training at all, up from a third in 2011. We are a country that publishes more skills reports than any other in Europe and pays for fewer of them.

Another of Darius’s lines hit harder still. “Training should be harder than reality, so that when they enter the world, it feels easier than what they went through.” British schools, by and large, run the reverse experiment. Children are trained in conditions calmer and more orderly than the average open-plan office, and adult life arrives as a series of small shocks: more noise, more ambiguity, and considerably more arguing with people who hold strong views about the photocopier.

Freddie starts secondary school in September. I do not know what he will be tested on at 16, or what the labour market will look like when he is 21. The people running British skills policy do not know either. The rational response to genuine uncertainty is the disposition John Amaechi described on the IFOW stage: neither converts nor martyrs, but agnostics, with a feel for the tools.

The unwelcome news is that nobody is coming to do this for you. The schools are not going to. The government is not going to. The employer, on current evidence, is going to spend less on you next year than this. The good news is that the tools are sitting on your laptop already, the courses are mostly free, and the only investment required is the patience to use the tools badly for a while before using them well. The agnosticism is, as Darius would put it, a matter of where you choose to place the responsibility. Mine. Yours. Freddie’s, in due course.

The trouble is that the responsibility is easier to take when you have the room to fail. Fiona’s point about the West Midlands was that most young people do not. The children whose parents read this newsletter will work it out. The children whose parents have never heard of it, whose schools cannot afford the staff to introduce them to the tools, and who have to walk three miles to a job interview because the bus has been cancelled, will not. AI agnosticism, left unaddressed, becomes the next axis along which inequality widens, and the gap is considerably harder to spot than the old ones. Whether anyone in power has the appetite to prevent that is the next question.

The present

Image created by Nano Banana

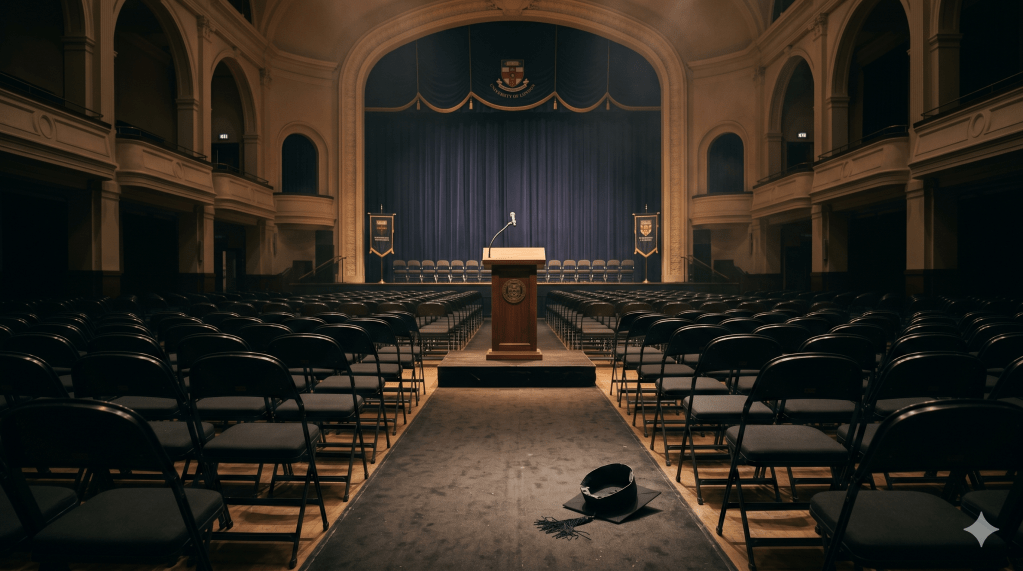

The young people whose education I’ve written about above are not waiting for politicians, employers, or speakers at the IFOW to settle the question of what their futures should look like. This last month, they have been making their feelings known, loud and clear, on the stages where their educations conclude.

At the University of Arizona on 15 May, former Google CEO Eric Schmidt rose to deliver a commencement speech of the kind technology executives have been delivering for 15 years: complicated, contrite, and mildly evangelical. The platforms that connected us also isolated us. The students must adapt. Then he said the letters A and I, and the booing began.

Schmidt paused. To his credit, he tried to engage with it. “There is a fear in your generation that the future has already been written,” he told the crowd, “that the machines are coming, that the jobs are evaporating, that the climate is breaking, that politics are fractured, and that you are inheriting a mess you did not create.” He called the fears rational, which they are, and asked the graduates to shape AI rather than be shaped by it. The boos intensified anyway.

A few days earlier, at the University of Central Florida, the commencement speaker, real-estate executive Gloria Caulfield, informed graduates that AI was “the next industrial revolution”. The video shows Caulfield pausing mid-sentence, raising her hands in surrender, and asking, with the calm of a substitute teacher addressing an unexpectedly hostile classroom: “Woah, what happened?”

What happened was that the AI conversation has been done at young people, not with them, for several years now, and the bill has come due.

Marcus Hutchins, the British security researcher who famously stopped the WannaCry ransomware attack in 2017 (and whom I interviewed for the New Statesman last year), smartly captured the dynamic on LinkedIn: “Everyone is sick of having AI shoved down their throat 24/7. Imagine spending years of your life and tens of thousands of dollars studying, then instead of celebrating your achievements, this asshole shows up to your graduation to talk about AI for 20 minutes. Wrong time, wrong place, and a complete inability to read the room. These people have spent years telling everyone AI is going to make their degrees worthless, them unemployable, and then as if that wasn’t enough, they show up to graduations to promote their AI products.”

Hutchins is right about the room, and correct about the bill. The graduates of 2026 are the first cohort to have completed an entire degree under the shadow of generative AI. They have spent four years being told, by the same industry now lecturing them on adaptation, that their qualifications will be worthless. They have watched their universities raise fees, and their potential employers freeze entry-level hiring. And they are now expected to applaud the person who built the asteroid – in Schmidt’s case, by accelerating Google’s AI research for two decades – for arriving on stage to explain it.

It is, in fairness to the speakers, a tonal challenge. The right time to tell a 22-year-old that the tools are exciting is somewhere between never and considerably earlier. By the time they have crossed the stage in a rented gown, the appropriate emotion is celebration, not reorientation. AI agnosticism, the disposition John Amaechi described on the IFOW stage on 18 May, requires the room to have at least some belief that the people offering it are on the room’s side. The Schmidts and Caulfields of the world have spent too long on the other side to be heard now.

The booing graduates, in this reading, are not anti-technology. They are anti-being-patronised by people who, in their view, have spent the last decade promising the impossible and delivering the disquieting.

The harder question is the one Hutchins’s post stops just short of answering. If not the inevitability narrative, then what? If not the well-meaning Schmidts and the off-key Caulfields, then who? The answer, in my own experience this month, is the one Fiona prescribed for the medical model: you build the skills by doing the thing, in increasing doses, under the eye of people who already do it.

I have been doing more of this myself than at any point in my career.

In early June, I will be at SXSW London, the festival’s second UK outing, which once again has AI threaded through almost every session. (I will report on that more fully in next month’s edition.) The week after, I will be a roving reporter, followed by a camera crew, for London Tech Week, interviewing keynote speakers as they come off the main stage at Olympia. Also that week, I am giving a keynote of my own for Ericsson on the collaboration required to take 5G to its next phase, which is a brief that demands rather more humility than I am naturally inclined towards. Additionally, I’ve been invited to audition for a TEDx talk on what I have been calling the feeds and the feels, an update to the birds-and-bees chat for parents whose children are about to inherit a smartphone.

None of this came naturally. Three-and-a-half years ago, when ChatGPT launched in November 2022 and the first stirrings of what I have since come to call FOBO (fear of becoming obsolete) set in, I was a journalist who largely avoided public speaking. The skills required to do what I will be doing this June are skills I have had to acquire, in public, by gaining experience.

The encouraging news, for anyone else considering the same leap, is that the discomfort lifts. The keynotes become easier. The video work becomes natural. The TEDx audition, which a year ago would have terrified me, now feels like a useful next stretch. None of this was on a SATs paper. None of it was on any qualification I ever held. All of it has come from putting myself, repeatedly, in situations where I was the least qualified person, and staying there until I was not. The thing is, venturing outside one’s comfort zone is as thrilling as it is rewarding.

This is, I suspect, what the booing graduates need to hear next, and from someone other than Eric Schmidt. The tools are real. The disruption is real. The case for agnosticism is real. But the skill that closes the gap between the test you have just sat and the labour market you are about to enter is the willingness to be bad at things in public for a little while. Nobody teaches it. Nobody can. You have to walk onto the stage yourself. Nobody else is going to do it for you.

The past

Walking onto the stage yourself, individually, is the lesson of this month’s interviewees. Walking onto the stage collectively is the longer lesson of the past 125 years.

International Workers’ Day, on 1 May, exists for two reasons. The first is to commemorate the 1886 Haymarket affair in Chicago, when a peaceful demonstration in support of the eight-hour day (a six-day week with 16-hour shifts was typical at the time) ended in a bomb, a trial, and a movement. The second is to remind us every year that workers’ rights are not features of the natural environment. They were fought for. They were resisted. They can be taken back.

We are not very good at noticing this in 2026. The cultural memory of organised resistance has thinned to the point where most British under-thirties associate trade unions chiefly with cancelled trains.

So I went, a couple of weeks ago, to see The Music is Black: A British Story at the V&A East, the first major exhibition at the museum’s new £135 million Stratford site. The show traces 125 years of Black British music, through 200 iconic objects – including Stormzy’s Union Jack stab vest from Glastonbury 2019, and Dame Shirley Bassey’s Goldfinger dress – and 120 tracks streamed through the visitor’s headphones, with the soundtrack changing depending on the user’s location in the exhibition.

Stormzy’s Banksy-designed stab vest from Glastonbury 2019

The exhibition is, on a single wall in the second room, devastating. It explains that British and other European empires used religion to extend control in colonised countries, sending missionaries to enforce a colonial reading of faith and producing new publications to normalise slavery, forcing millions of enslaved Africans to convert to a denomination of Christianity. This oppression, the wall continues, inspired songs of rebellion and hybrid faiths. From this emerged the gospel voice, which set the vocal standard for almost all of the contemporary music that followed.

In other words: the dominant sonic texture of the past 125 years of Western popular culture is, at root, the sound of people refusing to be silenced. Soul, ska, lovers’ rock, two-tone, jungle, garage, grime, drill. A continuous argument with the system, conducted in time signatures.

Acquiescence is the natural state of any system that has not been challenged recently. It is also the cheapest. Saying nothing costs nothing. Going along with the new rota, the new tool, the new restructure, or the new commencement speaker explaining the inevitability of one’s own redundancy requires no effort and produces no friction. This is why the default settles where it does. Not because the people imposing it are evil, but because objecting is genuinely tiring, and the rewards for objecting are diffuse, delayed, and frequently never collected by the objector.

The exhibition is a 125-year demonstration of the opposite. People who were never going to be reasonably accommodated, who had every economic incentive to keep their heads down, who would have benefited individually from going along, chose instead to argue with the system, in public, in song. The reward, in many cases, accrued to the children of the children of the people doing the arguing. Two-tone gave way to grime. Grime gave way to drill. The conversation continued. And the British identity those movements forced into existence is now, in plain and undeniable terms, ours.

The contemporary version of the question is whether anyone in any workplace, school, or commencement hall will do the equivalent for AI before it is fully imposed. The first stirrings are visible. The booing graduates of Arizona and Florida were not just expressing displeasure. They were, however ungracefully, refusing to grant the assent the speakers had clearly expected. It is a small thing. It is also the first move.

A still from a recent Thank Flux it’s Friday video

I have been making a much smaller version of this argument, with steadily diminishing literary returns, every Friday morning since January, in a short video series called Thank Flux it’s Friday. The premise is that the more AI-generated content there is on LinkedIn, the more useful it is to put a (real) human face on the other side of the lens. Every week, I share a short human-work evolution story.

There is now a small but growing audience for it, which suggests, encouragingly, that the conch shell – William Golding’s, the one in Lord of the Flies that asks the boys to listen to each other – has not yet been lost. It just needs picking up, by somebody who is willing to insist on having a say.

Tech for good: Coracle

The opposite of an AI-celebrating commencement speech, both rhetorically and practically, is a battered Windows 10 laptop in a prison cell.

Earlier this month, I spoke to James Tweed, the founder of Coracle, a Cambridge-based company that now provides secure offline learning devices to more than 90% of the prison estate in England and Wales. James is a former shipping man, who chartered oil tankers in the 1990s, which is how the company acquired its nautical name. He returned to academic study in 2017 to write an MPhil thesis at Cambridge on what happens to people who lack internet access.

James Tweed, founder of Coracle Online

Prisoners, it turned out, were one of the few groups in British society who fit the description. They still are. There are around 87,000 of them. As James pointed out at a recent United Nations Office on Drugs and Crime expert group in Vienna, which helped produce the newly published UNODC/ICRC Handbook on the Use of Technology in Prison Settings (in which Coracle features prominently as best practice), they are about to be released into a country that is cashless, paperless, and incomprehensible to anyone who left it more than a decade ago.

“Today, everyone has mobile devices, everything is online, and the world is increasingly cashless and paperless,” James told the UN group. “Many people released from prison are totally unprepared for this. If we really want to cut reoffending, we need to ensure all of those released have basic digital skills so they can access work and housing and do all of the things most of us take for granted.”

The numbers are bleak. James points out that 40% of UK prisoners were permanently excluded from school. The national figure is 0.1%. For most of them, education is the place where life first went wrong. Put them in a classroom and they will play up, because performing in front of one’s peers is the worst possible setting in which to admit one cannot read. Put a laptop in front of them, in the privacy of their cell, and something else becomes possible.

Coracle’s platform runs adaptive AI in the background, lowering the text’s reading age when comprehension falters and refocusing the screen when the cursor begins to wander. The point of the technology is to remove the shame from the act of learning. James told me about a man he met at HMP Grendon, the country’s only therapeutic prison, who had been illiterate when sentenced as a teenager and had taught himself to read inside. By the time James met him, he was working towards an Open University degree. He now has a job at a Greene King pub, worked at Coracle for six months last year, and is, in James’s words, “the kind of person who is not going back to prison”.

The case for this, in the driest possible language, is that every £1 spent on Coracle returns around £16 in reduced reoffending, according to a Crest Advisory evaluation last September. The prisoner education budget this year was cut by a third. The Treasury, in parallel, is finding the money to build new prison places in anticipation of a population that may reach 100,000 by the early 2030s. The arithmetic, on any rational measure, is the wrong way round.

James has also founded a separate charity, Rebooted, which recycles older laptops, installs Google’s ChromeOS Flex, and provides them to released prisoners and to the 300,000 British children who currently have a parent inside. “If we can break the cycle by keeping access to education alive,” he told me, “that is a significant thing we can do.”

Coracle is, in the most literal sense, a tech-for-good story: a piece of technology designed to fit the people, rather than the other way around. It is also a direct counterpoint to almost every theme of this month’s edition. The graduates Eric Schmidt addressed in Arizona were booing because the tools had been imposed on them. The prisoners James equips are quietly learning to use the tools in their cells because somebody finally remembered to ask. The difference, again, is consultation versus conscription. Coracle is on the right side of that line.

It is also, not coincidentally, doing the foundational mindset work Darius Norell complained nobody is funding. The reading age adjustment, the privacy of the cell, the chess game as a way in: all of it is designed to rebuild the disposition that British education failed to instil in these people the first time round. Responsibility, in James’s model, ends up in exactly the right lap. The prisoner does the work. The technology helps. The state, eventually, saves £16 for every £1 it puts in. Everyone benefits, with the possible exception of the people running the budget for new prison places.

This is what technology built for human flourishing actually looks like.

Statistics of the month

🧠 The organisational bottleneck

AI adoption is now constrained more by institutions than by individuals. Microsoft’s analysis of trillions of anonymised Microsoft 365 productivity signals, combined with a 20,000-worker survey across ten countries, finds that institutional factors (culture, manager support, talent practices) account for more than twice as much of AI’s impact as individual factors like mindset and behaviour (67% versus 32%). Some 58% of AI users now say they are producing work they could not have produced a year ago, rising to 80% among Frontier Professionals. The skills are arriving. The systems are not. (Microsoft, 2026 Work Trend Index)

😟 Fear is winning

Seven in ten of the UK public are worried about the economic impacts of AI. Six in ten think it will eliminate more jobs than it creates. Half think its impact will be worse than a normal recession. One in five think it will cause civil unrest. Nearly six in ten agree with Anthropic CEO Dario Amodei’s prediction that AI could eliminate half of all entry-level white-collar jobs within five years. The booing graduates of Arizona and Florida, in other words, are not statistical outliers. (King’s College London Institute for AI / Policy Institute, AI and the Future of Work)

💷 Tax the robots

Almost half of Britons (47%) would support a tax on work done by AI rather than humans, against just 20% opposed. Labour, Lib Dem and Green voters are most enthusiastic (55-58% in favour), with Tories not far behind (38% versus 27% opposed). Even Reform voters are net positive, narrowly. The idea, raised most recently by a British tech entrepreneur to the BBC and previously by Sam Altman and Bill Gates, was once fringe. It is now mainstream public opinion. (YouGov, How do Britons think AI will impact the UK?)

📱 Bring your own AI

Some 53% of UK workers are now using personal AI tools to do their jobs, rising to 75% among 25- to 34-year-olds. In the absence of fit-for-purpose enterprise systems, employees are quietly building their own. WalkMe’s separate State of Digital Adoption 2026 report puts the global cost of bad workplace tech at 51 lost days per worker per year, roughly a fifth of a working year disappearing into the IT helpdesk queue. (Kantar for NTT DATA Business Solutions and WalkMe / WalkMe State of Digital Adoption 2026)

⚽ AI in the dugout, instinct in the box

A survey of 1,000 UK business executives commissioned by Hemsley Fraser finds that 55% would back AI playing a central role in World Cup tactical decisions, and 47% would trust an AI-guided manager over one leading on instinct alone, more than twice the 21% who would back instinct. But 62% still believe strong leadership matters more than data, and 59% say instinct and gut feel beat data in the knockout stages. (Hemsley Fraser)

If you’re reading this – thank you – and haven’t yet subscribed, you can sign up for Go Flux Yourself (there should be a pop-up). Please feel free to share it with friends and colleagues, too. Each edition lands on the last day of the month.

Get in touch: oliver@pickup.media. I write, speak, and strategise on the future of work, AI, and human capability. For speaking enquiries, contact Pomona Partners.