TL;DR: April’s Go Flux Yourself celebrates World Autism Acceptance Month and examines why the economy is being rewired to reward pattern recognition, analytical depth and creative problem-solving while simultaneously locking out the population that does those things best.

Image created using Luma’s Uni-1

The future

“The disorder framing says: something is wrong with you. Fix it. Suppress it. Medicate it away. The superpower framing says: actually you’re special! Lucky you! Neither is useful. Neither is honest. Neither asks the more important question, which is: ‘What does this person need to understand about how they function?’”

I want to start with a confession. I ended contractual work at newspapers and established Pickup Media Limited well over a decade ago. While I have constructed various respectable-sounding explanations for that decision over the years, the honest version is simpler: I am just not very good at working in offices or with other people’s agendas.

I love collaborating, and I’m lucky to have a roster of fun clients, most of them longstanding, across a range of industries. But working on my own terms, with flexibility, in my own rhythm, knowing my value, and organising my days around how my brain actually functions has been the difference between surviving and thriving. I mention this not because I am claiming any particular neurological distinction, but because the older I become, the more I notice how many of the most capable people I know have quietly arranged their working lives around similar principles, whether or not they have a diagnosis to explain why.

I should also say clearly: I am in no way an expert on neurodiversity. I am a journalist. What follows in this month’s Go Flux Yourself is the product of research, interviews, and reporting, not clinical authority or lived experience. But the story I found when I started pulling at this thread is so striking, and so relevant to the questions this newsletter returns to every month – who benefits from the way we organise work, who loses, and what happens when we get it wrong? – that it felt irresponsible not to write about it. If I have got anything wrong, I would genuinely like to hear about it.

Here, then, is the story. Something peculiar is happening in the global labour market. The skills that employers say they most urgently need – pattern recognition, analytical depth, sustained focus, creative problem-solving, the capacity to hold a complex system in your head and spot the fault line nobody else can see – are the cognitive traits that many neurodivergent people demonstrate as their default setting.

Can you imagine doing a job that perfectly suits you? Not tolerably, but properly: one that uses the way your particular brain works as an asset rather than an inconvenience. Over three years ago, I interviewed Professor Erik Brynjolfsson for Raconteur. Brynjolfsson directs the Digital Economy Lab at the Stanford Institute for Human-Centered AI and is arguably the world’s leading authority on the relationship between digital technology and productivity. His argument was bracingly simple and remains, in 2026, largely unaddressed. “Human capital is a $200 trillion asset in the US, bigger than all the other assets put together, and about 10 times the country’s gross domestic product,” he told me in early 2023. “The most important asset on the planet is the one we’ve been measuring the worst.” Three years and an AI revolution later, there is no evidence that the measurement has improved. The consequence? Human capital is “probably the most misallocated asset on the planet. Businesses are not putting the right people in the right jobs.”

Consider what that means. Not just at the macro level, where the numbers are so large they become abstract, but at the human level. Brynjolfsson put it plainly: “Think of how many people are not in the right job, living lives of quiet desperation. They probably have some capabilities that could fulfil another job much better, but they’re not being matched to it because the infrastructure is not there.”

Around five years ago, he and his Stanford colleagues started building a platform called work2vec, which used data from 200 million online job postings to map the distance between skills, roles and people in a multidimensional space, making it possible to see how close an electrician is to a fibre optics engineer, or a data scientist to a machine learning specialist. If you can see the adjacencies, you can redesign the pathways. For instance, if you need a fibre optics technician and you can see that electricians share 85% of the required skills, you train the gap rather than advertising for a unicorn.
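For readers who like to see the mechanics, the adjacency idea can be sketched in a few lines of Python. This is a toy illustration, not work2vec itself: the roles, skill names and weights below are invented for the example, and the real system presumably learns its representations from job postings rather than from a hand-written table. The sketch treats each role as a vector of skill weights, measures closeness with cosine similarity, and lists the skills you would need to train to move from one role to another.

```python
from math import sqrt

# Hypothetical skill weights (0 to 1), invented for illustration only.
# These are NOT work2vec data.
ROLES = {
    "electrician": {
        "wiring": 1.0, "safety": 0.9, "testing": 0.8, "cable_routing": 0.7,
    },
    "fibre_optics_technician": {
        "wiring": 0.6, "safety": 0.9, "testing": 0.8,
        "cable_routing": 0.9, "splicing": 1.0,
    },
    "data_scientist": {"statistics": 1.0, "python": 0.9, "testing": 0.3},
}


def cosine_similarity(a, b):
    """Closeness of two roles: 1.0 means identical skill mix, 0.0 means no overlap."""
    dot = sum(a[skill] * b[skill] for skill in set(a) & set(b))
    norm_a = sqrt(sum(w * w for w in a.values()))
    norm_b = sqrt(sum(w * w for w in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def shared_fraction(current, target):
    """Fraction of the target role's skills that the current role already has."""
    if not target:
        return 0.0
    return len(set(current) & set(target)) / len(target)


def skill_gap(current, target):
    """Skills the target role needs that the current role lacks entirely."""
    return sorted(set(target) - set(current))


if __name__ == "__main__":
    electrician = ROLES["electrician"]
    target = ROLES["fibre_optics_technician"]
    print("similarity:", round(cosine_similarity(electrician, target), 2))
    print("shared skills:", shared_fraction(electrician, target))
    print("train the gap:", skill_gap(electrician, target))
```

With these made-up numbers, the electrician sits far closer to the fibre optics technician than to the data scientist, and the only missing skill is splicing. That is the "train the gap" logic in miniature: the distance tells you where to look, and the gap tells you what to teach.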

In 2026, the matching infrastructure Brynjolfsson called for is still largely missing. The World Economic Forum’s January 2026 scenario analysis suggests that the variable that determines whether AI augments or displaces us is not the technology. It is whether we invest in the people who use it.

The WEF’s Future of Jobs Report 2025, surveying over 1,000 employers representing 14 million workers, found that analytical thinking remains the top core skill, with seven out of ten companies considering it essential, followed by resilience, creative thinking, and curiosity. Demand for AI literacy skills increased by 70% in a single year. And 39% of all skills required in the job market are expected to change by 2030.

Brynjolfsson was talking about the entire labour market. But there is one population where the misallocation is so severe, so well documented, and so absurdly at odds with what the economy actually needs, that it amounts to a case study in institutional self-sabotage.

In the 2024/25 financial year, just 34% of disabled people with autism – a form of neurodivergence – in the UK were in employment, compared with 82% of non-disabled adults. That figure has improved over time, from roughly 15% in full-time work (the National Autistic Society’s long-standing survey figure), to 22% when the ONS first measured it formally in 2020, to 30% at the time of the Buckland Review in 2024, and to 34% now. A recent National Autistic Society report found that 77% of unemployed autistic people want to work.

This is not a population that has opted out of employment. Rather, it is a segment that has been designed out, by application forms that penalise unconventional communication, interview processes that assess social performance rather than professional capability, and office environments built for a cognitive profile that represents, generously, 80% of the population. It is rather like designing a restaurant that only serves right-handed diners and then wondering why roughly one in ten people never books a table.

Autistic graduates, according to the Buckland Review, are twice as likely to be unemployed 15 months after university as non-disabled graduates. Those who do find work are the most likely of any group to be overqualified, the most likely to be on zero-hour contracts, and the least likely to be in a permanent role. If you deliberately set out to take some of the most analytically capable minds in the labour market and make them grateful for insecure work beneath their qualifications, you would struggle to design a more efficient system than the one we have now.

Indeed, in cybersecurity – an industry where the ISC2’s 2024 Workforce Study found 5.5 million people active globally against a gap of 4.8 million unfilled positions, meaning the workforce needs to grow by 87% to meet current demand – 19% of UK professionals already self-identify as neurodivergent, and NeuroCyberUK estimates up to three-quarters of cognitively able autistic adults could possess the aptitude for the field. We have a talent emergency the size of Ireland in cybersecurity alone, and a largely untapped population whose brains are wired for exactly the work that needs to be done. The fact that these two things have not been connected at scale is Brynjolfsson’s “most misallocated asset” argument in miniature.
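The 87% figure follows directly from the two ISC2 numbers quoted above. As a quick check, assuming the required growth is simply unfilled positions divided by the current workforce:

```latex
\text{required growth} = \frac{4.8\ \text{million unfilled positions}}{5.5\ \text{million active professionals}} \approx 0.87 = 87\%
```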

April is World Autism Acceptance Month, the prompt for this edition of Go Flux Yourself. The opening quotation for this edition comes from Ben Branson, founder of Seedlip, the world’s first distilled non-alcoholic spirit, and more recently of The Hidden 20%, an award-winning neurodiversity charity, chart-topping podcast and community of over 250,000 people.

Ben spent 39 years not knowing his brain worked differently. In those years, he built Seedlip – which began with a 17th-century book on herbal remedies, The Art of Distillation by John French, and a copper still bought online – from his kitchen in the Chilterns to 35 countries and 7,500 venues, sold a majority stake to Diageo, and pioneered a category now worth billions.

He also experienced addiction, homelessness, institutionalisation and childhood bullying so severe that he was seeing a psychiatrist at seven. I asked him how much of Seedlip’s success was his autism working for him, and how much it was destroying him. “Both,” he said. “Simultaneously. The whole time. The pattern recognition, the obsessive research, finding a book from 1651 and seeing a business nobody else could see, the sensory acuity: smell, taste, memory. One part of my brain was building something. The other was quietly unravelling.”

Image provided by Ben Branson

His diagnoses, for autism and Attention Deficit Hyperactivity Disorder (ADHD), arrived at 39. I asked what first made sense. I expected something structural, a relationship pattern perhaps, or a career decision. Instead: “The hearing. I’ve always had extraordinary hearing. I can listen to a TV on volume one. Then there’s the skin. Wool hurts me. Labels in clothing. I’d spent 39 years thinking that was just me being fussy.” Then, more quietly: “Before: I was broken. After: I had a different operating system.”

The Hidden 20%’s position is deliberately, almost stubbornly, precise: neurodivergence is neither a superpower nor a disease. “Both sides are lazy,” Ben said. “The disorder camp produces terrible statistics, not because neurodivergent people are broken, but because the system was built for a brain it doesn’t include. The superpower camp produces something almost as damaging: a toxic positivity where suffering gets minimised, and anyone who isn’t thriving is somehow doing neurodivergence wrong.” More plainly: “My brain has given me everything I’ve built. It’s also put me in hospital. The goal isn’t the silver lining. The goal is the truth.”

March 2025’s Go Flux Yourself explored the “Lost Einsteins”: economist Raj Chetty’s term for those with the potential to innovate but without the credentials, connections or capital to do so. Neurodivergent people are a particularly visible subset of those Lost Einsteins. Indeed, over 20 years ago, researchers at Cambridge, led by Sir Simon Baron-Cohen (Sacha Baron Cohen’s cousin), assessed both Sir Isaac Newton and Albert Einstein as “fairly certain” to have been on the autism spectrum. The minds that changed the world, from Newton to Turing to Tesla, were rarely the ones that fitted the systems designed to assess them (more on this, and on Brynjolfsson’s concept of “the Turing Trap,” in The past, below).

I wrote last year for the New Statesman about teenage hackers and the pipeline from bedroom gaming to cybercrime. Holly Foxcroft, a neurodivergent cybersecurity specialist at OneAdvanced, told me that autistic minds are “analytical and data-driven, spotting patterns others miss”, and that neurodivergent teenagers often lack dopamine, making hacking “basically a puzzle with a reward at the end: social acceptance otherwise lacking in the physical world”. These teenagers are not short on talent. They are short on legitimate education and (legal) work pathways. And when you brick up the front door, you should not be entirely surprised when people start climbing through the window.

Caroline Cavanagh, a hypnotherapist, speaker and anxiety specialist based in Wiltshire – and, like me, represented by Pomona Partners for speaking – put it to me with rather less academic caution. “You are missing out on the Mozarts of their time, the Turings of their time. You are missing out on having this employee in your business.” One of her current clients, a severely autistic young man, spent most of secondary school at home because his school could not cope. His talent proved exceptional: video and animation of a quality most professional studios would envy. He is now making a documentary about why employers should be more aware of neurodivergent needs, which is either deeply inspiring or deeply damning, depending on how long you sit with it. “All of our systems were designed at times when neurodiversity wasn’t acknowledged,” Caroline told me. “If you weren’t within the typical, you either had to bend and cut your raw edges off to fit in, or you were excluded.”

The problem starts long before anyone reaches a workplace. A 2023 report by the National Autistic Society found that only 39% of primary teachers and 14% of secondary teachers surveyed had received more than half a day of autism-relevant training in the course of their careers. Yet one in seven children is neurodivergent, according to the government’s latest estimate. Before anyone reaches a clinician, there is the queue: as of December 2025, 254,108 people in England were waiting for an autism assessment, more than the population of Southampton.

Ben was blunter: “If someone reads a resource on our website and it’s the only support they’ve had in five years, something has gone catastrophically wrong upstream of us.”

Some pioneering, supportive companies are producing results that make the inaction of others look increasingly peculiar. For instance, JPMorgan Chase’s Autism at Work programme now employs over 150 people on the spectrum across nine countries with a 99% retention rate. Auticon, the global IT consultancy where every consultant is on the autism spectrum, does not accommodate autism so much as it is engineered around it. Microsoft has run a Neurodiversity Hiring Program since 2015, replacing traditional interviews with work trials, a move so obviously sensible you wonder why it took a trillion-dollar company to think of it.

But the argument is no longer just about inclusion: it is about competitive advantage in an AI-driven economy. Josh Hough, founder of home care software firm CareLineLive, put it well during Neurodiversity Celebration Week, in March: as AI reshapes the workplace, traits often linked to neurodivergence – focus, pattern recognition, problem-solving – are becoming more valuable, not less. “A lot of businesses still want people who tick every box,” he said. “The reality is, people who think differently often solve problems differently. You need people who don’t just follow a process, but can see a better way of doing things.” In an economy that automates the routine and rewards the lateral, the neurodivergent mind is not a risk to be managed but an advantage. So why do so few companies have formal neuroinclusion policies in place? And what actually needs to change?

Both Ben and Caroline are specific in ways most corporate neurodiversity initiatives are not, largely because they work with actual humans rather than advisory boards. Ben ran a no-internal-email policy at Seedlip from day one, routing everything through Slack, not as a quirk but because his brain needs segmentation and clarity to function, and it turned out to make the whole company faster besides. His advice to the CEO who reads his story and thinks it sounds admirable but irrelevant: “You already have the answer. You just haven’t asked the right question. You’ve been told ‘get different voices in the room’. You nod. You hire a diverse panel. You put it in the deck. But have you ever asked ‘do we have a diverse mix of brains in here?’”

The people companies are losing – the undiagnosed, the mismanaged, the ones quietly burning out in environments designed for a cognitive profile they do not share – are, in Ben’s view, “probably your best problem-solvers. You just haven’t built the conditions for them to show you.”

Caroline told me about a woman she works with who was ready to leave a job she was excelling at, for no other reason than that nobody had ever told her she was doing it well. Not a pay dispute, not a culture clash, not a better offer elsewhere. Just silence where a sentence would have been enough. “It might just be a positive email, ‘well done today’,” Caroline said. “With that tiny investment, you can get such a phenomenal return.” This is not indulgence. It is calibration, the kind of management attentiveness good bosses already apply to their strongest performers without ever calling it an accommodation.

Kay Sargent, a workplace design specialist I spoke to earlier this year, extends the argument to the physical environment: workplaces should offer a spectrum of sensory experiences rather than standardising for a single cognitive norm. “Over 50% of 20-year-olds consider themselves to be neurodivergent,” she told me. “That is no longer a neurominority.”

The Buckland Review described autistic people as “an untapped workforce” and made recommendations across five areas. An independent panel led by Professor Amanda Kirby was appointed in January 2025 to improve job prospects for neurodivergent people. Their report was due last summer. The silence since has been conspicuous, though perhaps not surprising from a system that has managed to build a 254,000-person assessment waiting list without apparently regarding it as urgent.

Ben’s daughter River has been diagnosed autistic. Nine generations of Bransons have worked in agriculture and invention; his great-grandfather was knighted for services to both. “Is neurodivergence the thread?” he said. “I find it very hard to believe it isn’t. My great-grandfather was knighted for what his brain produced. His great-great-granddaughter has been diagnosed autistic. They almost certainly share something fundamental. We just finally have the word for it.”

When I asked what he is doing differently for River, he said: “She already knows. She has the language. She won’t spend 39 years gathering questions without answers.”

Brynjolfsson told me in 2023 that the next decade could be “the best we’ve ever had on this planet,” provided we close the gap between technological capability and our human response to it. Three years on, that gap is wider. For the 34% of autistic adults in work, and the 77% who want to be, and the quarter of a million people waiting for a diagnosis that might open a door the system should never have closed, Brynjolfsson’s optimism remains theoretical.

I asked Ben, finally, what he would tell the 25-year-old version of himself about his brain. His answer was six words: “It’s not chaos. It’s a pattern.”

The pattern is there. The talent is there. What remains is for UK employers to do something as simple, and apparently as difficult, as asking the right question about the brains already inside their buildings.

The present

This last month, I’ve been in various rooms where the arguments in this newsletter played out in real time, with real people.

For instance, on 15 April, I attended a session at the Houses of Parliament hosted by the All-Party Parliamentary Group on the Future of Work, chaired by Lord Knight of Weymouth and organised by the Institute for the Future of Work (IFOW). The topic was technological disruption and its impact on young people entering the labour market. The panellists included Fiona Aldridge, chief executive of the Skills Federation and member of the Skills England board, and Darius Norell, founder of Radical Employability (I followed up with both, and their words of wisdom about how to better prepare and serve youngsters for more successful careers will appear in May’s Go Flux Yourself).

Fiona framed the paradox neatly, paraphrasing Bill Gates’ line: we are probably overestimating the short-term impact of AI on youth employment and underestimating the long-term impact. Much of what is currently being attributed to AI is actually being driven by economic conditions and businesses’ technology choices in response to them. But the long game is far less comfortable. Rather surprisingly, her financial services members found that only between 0.5% and 1.5% of the workforce will ever need to be real AI specialists. The rest will need to work alongside AI, which is a fundamentally different proposition and one that the education system is not yet configured to deliver.

David Hughes, CEO of the Association of Colleges, was blunter in the IFOW session. The knowledge-rich curriculum to age 16 trains young people to pass tests, not to learn for life, he argued. The skills employers consistently say they want – confidence, problem-solving, working with others, the belief that you can work through a challenge – he called “middle-class skills”, because most middle-class kids absorb them at home. This hit me hard, as a middle-class father to two school-age children, each of whom has access to and enjoys a raft of extra-curricular activities, ranging from sport to scouts to drama and dancing.

Many others do not, and the education system does not compensate. Some 40% of free-school-meals children leave school without good GCSEs in English and maths, Hughes added. And there are nine million adults in the UK with poor literacy. If you cannot read or write confidently, your chances of adapting to technological change are slim.

The most affecting contribution came from Deborah, a King’s Trust Young Ambassador who is neurodivergent and has ADHD. She described doing everything the system asks of her: upskilling, posting on LinkedIn, attending networking events, and completing courses. Then … nothing. “A lot of the young people I’ve spoken to feel like they’ve been led to a cliff.” The system invests in getting people to the edge and then stops.

Lord Knight identified the absurdity that lies beneath much of this: candidates using AI to optimise their applications, employers using AI to filter them, everyone applying in the analogue way, yet with AI on both sides of the process. Nobody in the room could explain why this was better than an AI-native, skills-portfolio-based approach where people are matched to roles on the basis of what they can actually do. Brynjolfsson’s work2vec, in other words, is not just a Stanford research project. It is the answer to a question many are asking.

Image created using Luma’s Uni-1

A week later, on 22 April, I was at Olympia London, moderating a panel at Data Decoded called “Creating a data-led culture: the barriers and how to break them”, with Jason Foster (CEO of Cynozure), Matt Yates (Head of Talent Acquisition EMEA at Uber), Sam Davies (Director of Global Product Insight & Analytics for Comcast/Sky), and Kam Karaji (Director of Cybersecurity and Risk Management at the NFL, and holder of a Queen’s Gallantry Medal for counter-terrorism).

The main thrust of the session was that organisations are drowning in data yet starved for wisdom, and the barrier is not the technology: it is the culture. You can spend millions on dashboards, but if nobody in the room trusts the numbers enough to act on them, or is brave enough to say “I don’t know what this means”, then the dashboards are (expensive) furniture. Meanwhile, the people most fluent in these tools, the ones who grew up with AI in their pockets, are the generation having the door shut on them. Entry-level roles are being hollowed out across sectors, and with them, the apprenticeship layer where mid-level judgment used to be built.

Image created using Luma’s Uni-1

The day before Data Decoded, I moderated a CX Divide breakfast roundtable for Moneypenny at the Ivy’s Granary Square Brasserie in King’s Cross, alongside 13 senior customer experience leaders – and I wrote about it here. Moneypenny had surveyed 2,000 UK business decision-makers and 5,001 consumers on the same questions, and the perception gap is extraordinary: on social media, businesses rated their own performance 36 percentage points higher than customers did. On web forms, 32 points. On chatbots, 28. Businesses think they are nailing it. Customers know otherwise. The gap between what organisations believe they deliver and what people actually receive is, I suspect, the CX equivalent of the neurodiversity employment gap: a system that measures its own performance by criteria the people inside it never agreed to.

Every room I was in this month, from the House of Lords to Olympia to the Ivy in King’s Cross, asked the same question: are we building systems for the people we actually have, or for the people we assume?

The past

In 1950, famed mathematician and codebreaker Alan Turing proposed what he called an “imitation game”: could a machine imitate a human so convincingly that an observer could not tell the difference? The question launched an entire field (and there is even a 2014 film of the same name about him). It also, inadvertently, set a trap for technology innovators.

Erik Brynjolfsson, the Stanford economist I quoted earlier in this edition, has written extensively about what he calls “the Turing Trap”. His argument is elegant and uncomfortable. When AI is focused on replicating human capabilities (passing the Turing Test, in other words), it creates machines that substitute for human labour. Workers lose bargaining power. Value concentrates. The people who control the technology become richer. The people the technology replaces become poorer.

However, when AI is focused on augmenting human capabilities – doing things humans cannot do alone, rather than mimicking what they already can – then humans remain indispensable. Complementarity, not substitution, is the path to shared prosperity.

The trap, then, is this: our instinct is to build machines in our own image, because imitation is how we measure intelligence. But the more successfully we do that, the more we make human workers replaceable. The goal, Brynjolfsson argues, should not be human-like AI but human-complementary AI. Not a machine that thinks like us, but a machine that thinks differently from us, so that together we can do more than either could alone.

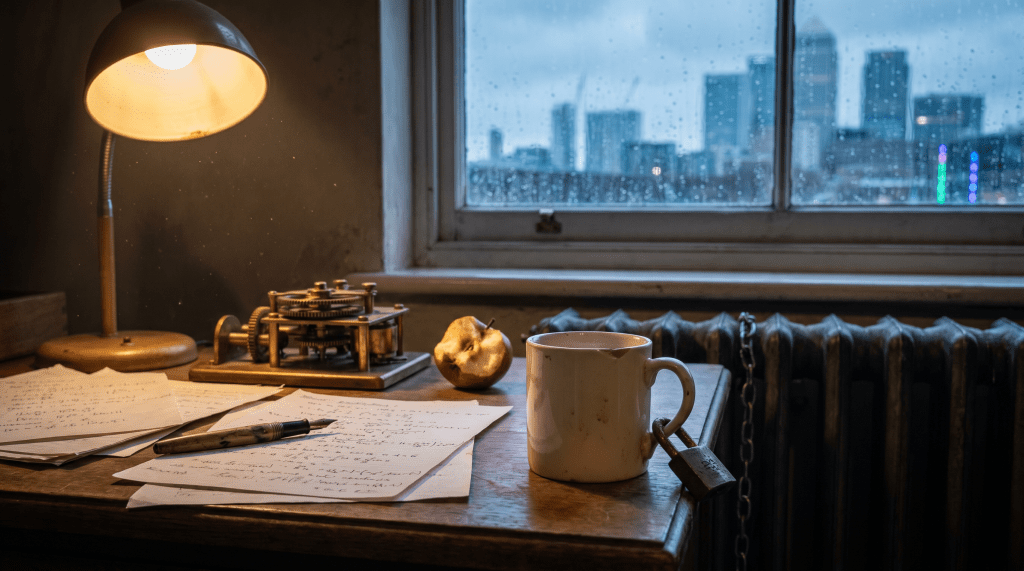

Image created using Luma’s Uni-1

The irony is exquisite. The man who set the original test (can a machine pass for a human?) was himself a mind that the human system could barely accommodate. Turing was highly literal, socially unconventional, obsessively focused, and thought in patterns so alien to his contemporaries that his 1936 paper on computability was not widely understood for years. His colleagues at Bletchley Park found him brilliant but baffling. He chained his tea mug to a radiator to stop people borrowing it, cycled to work wearing a gas mask to avoid hay fever, and was, by every informed modern assessment, almost certainly autistic.

He was also prosecuted for homosexuality in 1952, chemically castrated, and died two years later at the age of 41. The system he saved could not tolerate the mind that saved it.

Turing is the most striking example, but he is not alone. Isaac Newton spent days without eating when absorbed in a problem, had virtually no close relationships, and was so socially withdrawn that his lectures at Cambridge were often delivered to an empty room. Henry Cavendish, who discovered hydrogen and measured the density of the Earth with extraordinary precision, was so averse to human contact that he communicated with his servants by letter. Oliver Sacks wrote that Cavendish’s biography “constitutes perhaps the fullest account we shall ever have of the life and mind of a unique autistic genius”.

Elsewhere, Charles Darwin was a solitary, anxious child who preferred long walks and letter-writing to social interaction; Michael Fitzgerald at Trinity College Dublin published research concluding he had Asperger’s syndrome. Nikola Tesla, whose alternating-current motor powers the world, had an obsessive need for numerical patterns (he would circle a building three times before entering), extreme sensory sensitivity, and virtually no capacity for casual social engagement.

What connects them is not just that they were brilliant. It is that their brilliance was inseparable from the way their brains worked, the traits that made them difficult to employ, difficult to manage, and difficult to assess by conventional means. Newton’s ability to focus for days without interruption was not a personal quirk. It was the cognitive engine that produced the Principia. Tesla’s compulsive pattern-seeking was not a disorder. It was the source of his inventions. And Turing’s literalism and unconventional thinking were not social deficits but the attributes that enabled him to conceive of a machine that could think.

We tend to celebrate these minds in retrospect while systematically excluding their contemporary equivalents. Newton gets a statue; the autistic physics graduate gets a zero-hour contract. Turing gets a posthumous pardon; the neurodivergent teenager gets a 17-month wait for a diagnosis.

Brynjolfsson’s Turing Trap offers a way of thinking about why. If the default instinct is to measure intelligence by how closely it resembles the norm (the imitation game), then any mind that deviates from the norm will be penalised, regardless of what it can do. The trap is not just economic: it is cognitive. We have built assessment, education, and employment systems around the assumption that intelligence looks a particular way: fluent, sociable, compliant, generalist. The minds that break through are the ones lucky enough, or stubborn enough, to route around the system entirely.

Ben Branson built Seedlip from his kitchen because no employer would have known what to do with him. Turing broke the Enigma code because Bletchley Park was desperate enough to overlook his eccentricities. Newton produced his greatest work during the plague, when Cambridge shut and he was left alone. So far, civilisation’s most reliable method of supporting neurodivergent genius has been to accidentally leave it alone. If I were a teacher marking some work, I would probably write “room for improvement”.

Tech for good: Liverpool City Region Combined Authority

It is one thing to talk about AI serving people. It is another to be handed health, transport and education for 1.6 million residents and told to make it happen.

Tiffany St James is the Chief AI Officer for the Liverpool City Region Combined Authority, the UK’s first regional public sector CAIO, a role she took up in September 2025. I spoke to her for a recent episode of DTX Unplugged, the podcast I co-host. She clearly articulated the gap between AI enthusiasm and AI usefulness.

“Almost everyone starts with the problems,” she said. “What are the wicked problems? How can I fix them? It’s not bad doctrine, but I like to start with maturity. Where are we now? Where are we trying to get to?”

That sequencing matters because Liverpool is not starting from scratch. The region has over 450 kilometres of high-fibre infrastructure enabling 5G, a supercomputer at STFC Hartree offering affordable slices of compute to smaller businesses, and undersea cables running from the US and Ireland into Southport. The building blocks are there. What was missing was someone to connect them to outcomes that matter to people’s lives.

Image of Tiffany taken from DTX Manchester in April

One programme Tiffany inherited and is now expanding puts assistive learning technology into primary schools, currently 10% of the region’s primaries, focused on the critical Year 6 transition before secondary school, offering personalised support in science, maths and English. “We can’t fund this in perpetuity,” she said. “But what we can do is help our teachers have exposure to different tools to enable them to be more critical consumers of the technology on offer.”

What makes her approach distinctive is the insistence on people before technology. “What I see time and time again is organisations leaning into technology first, and that just amplifies good and bad culture and processes. The pace of change of people, their skills, their infrastructure, their confidence, is mismatched with the pace of technology.”

That principle was forged under pressure. In June 2017, Tiffany was called into Gold Command to run digital communications during the Grenfell Tower response. From that experience, she developed what she calls a “single-line strategy”: one sentence that gives a team the clarity to say no to distractions and yes to what matters. For Liverpool’s AI programme, the strategic touchstone is: We are here to help better outcomes for residents, citizens and visitors, enabled by AI.

Liverpool has also produced a resident-led Data and AI Charter, developed through a Civic Data Cooperative, with 11 principles setting out how the region’s residents want their data to be used. It has been recognised by central government as a model of best practice.

“If you say, ‘Please give me your data,’ they’ll say no,” Tiffany told me. “But if you say, ‘We could understand if your house was at risk of fire and get the fire brigade to you faster,’ they’ll say yes.”

This is what AI for good looks like when someone actually has to deliver it: a maturity assessment, a single-line strategy, a resident charter, and the honesty to admit you cannot fund everything in perpetuity.

Statistics of the month

🧠 The engagement slump

Global employee engagement fell to 20% in 2025, its lowest level since 2020 (remember what happened that year?), marking the first time Gallup has recorded two consecutive years of decline. Low engagement costs the world economy an estimated $10 trillion in lost productivity, equivalent to 9% of global GDP. Europe reports the lowest regional engagement at just 12%. (Gallup, State of the Global Workplace 2026)

📉 The manager crisis

Manager engagement dropped from 31% in 2022 to 22% in 2025, accounting for most of the wider engagement decline, according to the same Gallup report. Non-manager engagement has stayed roughly flat. In best-practice organisations, 79% of managers are engaged, nearly four times the global average. The technology works. The management doesn’t. (Gallup, State of the Global Workplace 2026)

🔐 The shadow AI blind spot

Two-thirds of UK organisations do not know what data is being shared with AI tools. A third admit employees are sharing data through external, unsanctioned tools. When 44% of UK workers have used unapproved AI in the past 30 days, and 39% have done so with confidential data, the question is no longer whether shadow AI is a problem. It is whether anyone is looking. (SailPoint)

🏙️ London’s AI exposure

More than a million Londoners are in jobs facing major change from AI, roughly one in five. The exposure is heaviest in the same entry-level and administrative roles that the IFOW session at Parliament identified as already being hollowed out. The future is arriving fastest for the people least prepared for it. (Mayor of London / GLA Economics)

🏥 The wellbeing strategy gap

Some 43% of UK companies do not have a formal health and wellbeing strategy in place. For 18%, simply offering benefits is the strategy. A further 13% offer support on an ad hoc basis. In a labour market where engagement has hit a five-year low, nearly half of UK employers are, to use a technical term, winging it. (Everywhen)

If you’re reading this – thank you – and haven’t yet subscribed, you can sign up for Go Flux Yourself. Please feel free to share it with friends and colleagues, too. Each edition lands on the last day of the month.

Get in touch: oliver@pickup.media. I write, speak, and strategise on the future of work, AI, and human capability. For speaking enquiries, contact Pomona Partners.